ECHELON is a state-of-the-art, massively parallel subsurface flow simulator built from its very inception to harness the power of GPUs.

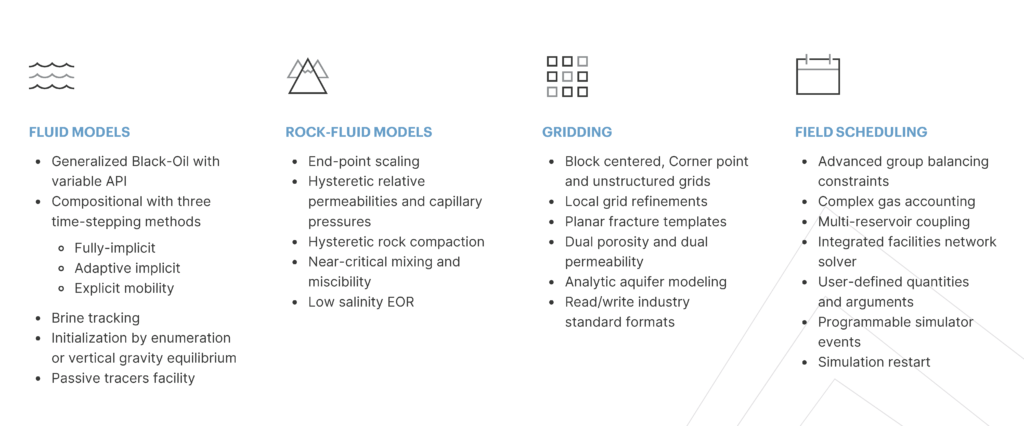

ECHELON has been under development by Stone Ridge Technology (SRT) since 2012 and in joint partnership with Eni S.p.A. since 2018. It is a state-of-the-art, massively parallel subsurface flow simulator built from its very inception to harness the power of GPUs. Its key differentiators over competing simulators are its exceptional performance, scalability, and reduced hardware requirements. It runs on one or multiple GPUs, which can be in a workstation, a server, an on-premises cluster, or the cloud, and is available on Windows and Linux. ECHELON runs black oil and compositional models and includes support for complex field management, reservoir coupling, surface networks, and much more.

Designed for GPU

SRT has designed and developed ECHELON from inception to run optimally on massively parallel GPUs.

This is one of the reasons it is so fast compared to its competitors. Stone Ridge Technology realized the potential of GPUs very early in their introduction to general-purpose computing.

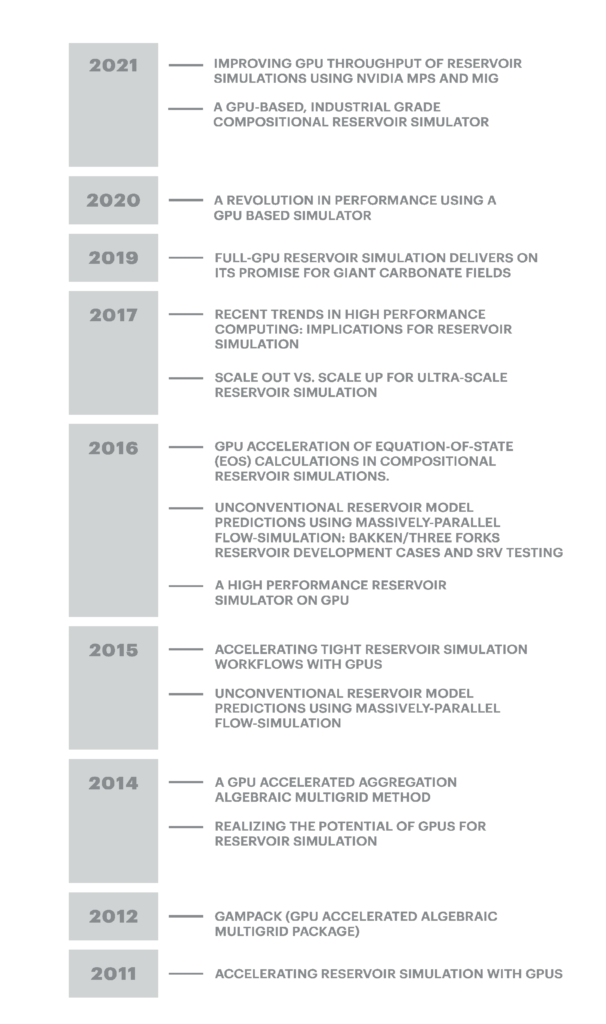

Our publications and talks date back to 2012 and include seminal papers highlighting the power of this platform for reservoir simulation (Figure 1).

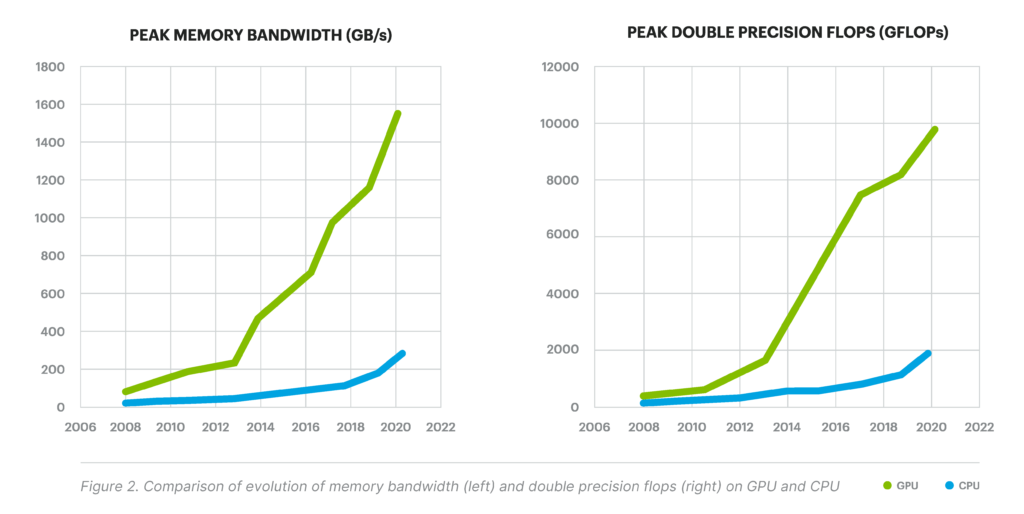

On a chip-to-chip basis comparing a state-of-the-art GPU with a state-of-the-art CPU, GPUs have consistently demonstrated 10x greater memory bandwidth and FLOPS capability.

Memory bandwidth is the speed at which a processor can move data into its computing elements, and FLOPS measures the speed at which it can perform calculations. Figure 2 plots both memory bandwidth and FLOPS for GPUs and CPUs over a period spanning 12 years.

Evolution of HPC

You should view the emergence of GPUs as computing platforms in the context set by the evolution of high-performance computing (HPC). HPC has evolved continuously over the past 40 years to offer platforms with progressively more parallelism (Figure 3).

Three epochs broadly categorize this evolution: the serial epoch, marked by the single-core CPUs of the 1990s; the parallel epoch, marked by the multi-core CPUs of the mid-2000s; and the current massively parallel epoch, marked by today’s GPUs with tens of thousands of cores. The shift towards increased parallelism has pushed the burden of extracting performance onto the programmer. To make use of GPUs, developers need to rethink and redesign their software to expose fine-grained parallelism.
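As a rough illustration of what exposing fine-grained parallelism means, the sketch below uses a toy one-dimensional smoothing step. It is not ECHELON code; the function names, the stencil weights, and the sample values are all hypothetical. The point it shows is that when each cell's new value depends only on the previous state, cells can be processed in any order, which is the property that lets a GPU assign one thread per cell. An in-place sweep, by contrast, carries a dependency from one cell to the next.

```python
# Illustrative sketch only: a toy 1D smoothing step, not ECHELON code.
# All names, weights, and values here are hypothetical.

def update_cell(old, i):
    """Kernel-style update: the new value of cell i reads only from `old`."""
    left = old[i - 1] if i > 0 else old[i]
    right = old[i + 1] if i < len(old) - 1 else old[i]
    return 0.25 * left + 0.5 * old[i] + 0.25 * right


def step_independent(old, order=None):
    """Update every cell into a new array; the visiting order is irrelevant."""
    order = range(len(old)) if order is None else order
    new = [0.0] * len(old)
    for i in order:  # on a GPU, each i could map to its own thread
        new[i] = update_cell(old, i)
    return new


def step_in_place(values, order=None):
    """Serial-style sweep: overwrites cells as it goes, so order matters."""
    values = list(values)
    order = range(len(values)) if order is None else order
    for i in order:
        values[i] = update_cell(values, i)
    return values


pressure = [100.0, 100.0, 400.0, 100.0, 100.0]
n = len(pressure)

forward = step_independent(pressure)
backward = step_independent(pressure, order=reversed(range(n)))
print(forward == backward)  # same result regardless of visiting order

sweep_fwd = step_in_place(pressure)
sweep_bwd = step_in_place(pressure, order=reversed(range(n)))
print(sweep_fwd == sweep_bwd)  # differs: the sweep has a loop-carried dependency
```

Restructuring an algorithm from the second form into the first is typical of the redesign work described above. Real simulators face far more complex dependencies than this toy stencil.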

ECHELON is the only commercial codebase developed from inception to operate on massively parallel GPU platforms. Its demonstrated performance has been so startling that some of its competitors have now reluctantly taken up the difficult task of porting their mature CPU codebases to GPU, a non-trivial effort that yields suboptimal results.

Performance Scaling

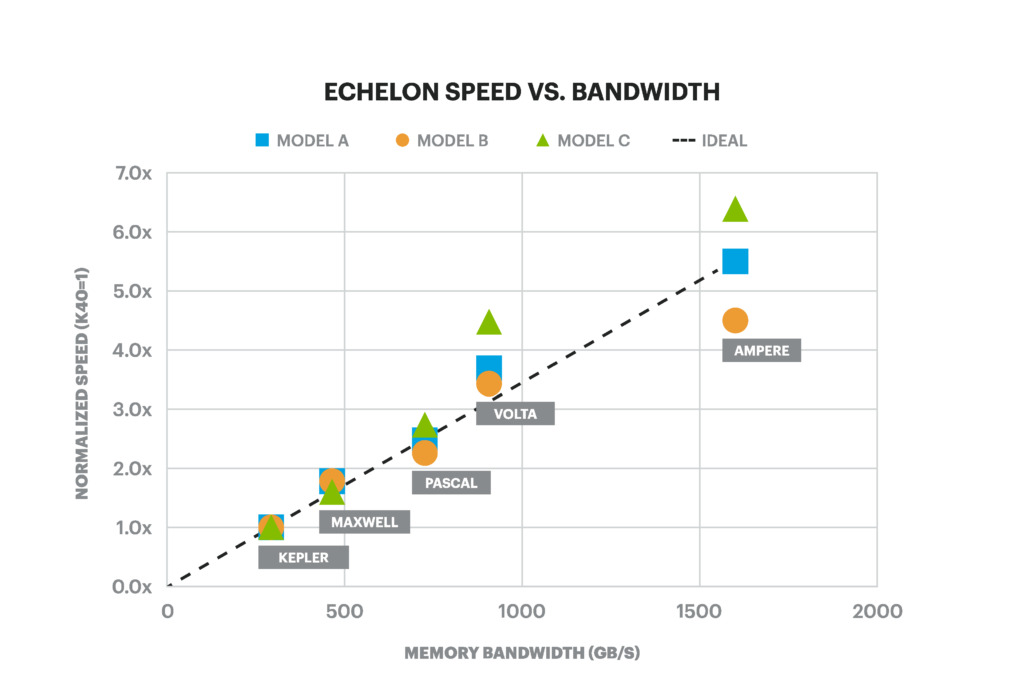

Because SRT designed ECHELON specifically for GPUs, its performance has scaled linearly with the capabilities of each new hardware generation, as Figure 4 shows. In the seven years from 2013 to 2020, ECHELON became more than 5x faster from hardware advances alone. The message is clear: GPU performance has been advancing at a greater pace than CPU performance for over a decade. ECHELON is uniquely poised to capitalize on the post-Moore’s law technology curve driving GPUs towards future advances.
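To put the seven-year figure in per-year terms, the short calculation below converts a total speedup into an equivalent annual factor. It assumes steady compounding, which is a simplification: real gains arrive in steps with each hardware generation. The function name is hypothetical. Since the stated speedup is "more than 5x", the result is a lower bound.

```python
# Back-of-the-envelope sketch: assumes the speedup compounds evenly each year.

def implied_annual_factor(total_speedup, years):
    """Return the constant yearly factor that compounds to total_speedup."""
    return total_speedup ** (1.0 / years)


factor = implied_annual_factor(5.0, 7)
print(f"about {(factor - 1) * 100:.0f}% faster per year")
```

Under that assumption, a 5x gain over seven years corresponds to roughly a 26% improvement each year from hardware alone.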

Robust and Accurate

ECHELON is a full-featured and robust code that has been thoroughly tested by Eni and our other clients and industry partners. ECHELON incorporates rigorous industry-standard convergence criteria in the solution of the governing equations and, equally important, adheres to results produced by legacy simulators. SRT has upheld the highest standards for accuracy, robustness, and reproducibility from the beginning of the development process. Figure 5 shows water and gas production rates from a representative well in a giant carbonate model with 3.5M cells and 43 years of forecast.